The Conservatives have helpfully provided a model ‘bad survey’ for us to dissect.

(I don’t wish to pick on the Conservatives; they are hardly unique, but they have been promoting a lot of rather poor surveys on Facebook recently.)

Obviously this isn’t actually a serious survey; it’s an attempt to generate propaganda stats (and harvest email addresses). But it’s a fun and instructive exercise to imagine it was a real survey.

Ok, where to start?

Long lists

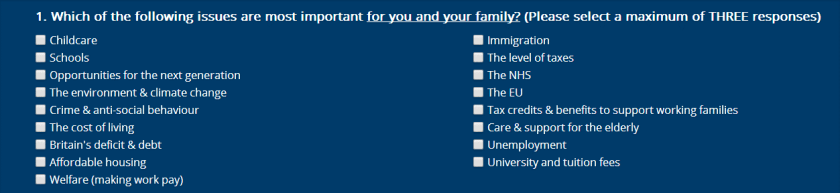

Questions 1 and 2 show respondents a big list of options, from which they can choose three items.

Fair enough, but we know that such lists suffer from order effects, chiefly a primacy effect – the position of an item in a list significantly affects its probability of being selected, and items near the top tend to be favoured. If you want respondents to choose an item, put it high on the list (or high in each column, in this case), or maybe at the very bottom. Not in the middle.

So it looks like the Conservatives might want us to care about childcare, immigration, schools, taxes, welfare etc. And perhaps they want us to forget about the EU (‘let’s hope UKIP goes away and doesn’t split our party in two’) and Ed Miliband’s “cost of living crisis”. (Or maybe this is accidental, I don’t know – the point is they ain’t going to get good data.)

But what if you really want respondents’ opinions on a large number of items? Well, one way to deal with that is to randomise the order of the items in the list (very easy to do with online surveys with large numbers of respondents) or, better still, to present each respondent with a different randomly generated subset of the items (again easy to do). Accessing this survey from a few different browsers/devices/IP addresses shows they aren’t doing this.
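As a rough illustration of how little work this takes, here is a minimal Python sketch of both ideas: a fresh random order per respondent, and a random subset per respondent. The item list and the function names are made up for this example (they are not taken from the actual survey), and a real survey tool would usually offer this as a built-in setting.

```python
import random

# Hypothetical list of issues, loosely based on the items discussed above.
ISSUES = [
    "Childcare", "Immigration", "Schools", "Level of taxes",
    "Welfare", "The EU", "Cost of living", "The deficit",
]

def shuffled_items(items, rng):
    """Return a copy of the items in a random order, leaving the original untouched."""
    order = list(items)
    rng.shuffle(order)
    return order

def random_subset(items, k, rng):
    """Return k distinct items, drawn at random and in random order."""
    return rng.sample(list(items), k)

if __name__ == "__main__":
    rng = random.Random()  # unseeded, so each run (respondent) differs
    for respondent in range(3):
        print(respondent, "full list:", shuffled_items(ISSUES, rng))
        print(respondent, "subset:   ", random_subset(ISSUES, 4, rng))
```

If you do this, it is worth storing the order (or subset) each respondent actually saw alongside their answers, so you can check afterwards whether position still made a difference.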

Ambiguous items

The lists above include things like “immigration” and the “level of taxes”. These items don’t seem very useful, since they don’t give any indication of the direction in which the respondent thinks the problem lies. Is immigration too high? Or is immigration to the UK too difficult? The Conservatives probably think the answer is obvious, but I personally believe the latter (and I’m in good Nobel Prize-winning company today), as would many employers, people from ethnic minorities, or anybody with the temerity to have friends or colleagues in a non-EU country. Similarly, many people think taxes (at least on the rich) are too low.

Overall, these questions have made the classic undergraduate mistake of trying to do too much in one survey question. If you actually wanted to know people’s views on all these issues, you’d want to ask a number of different questions.

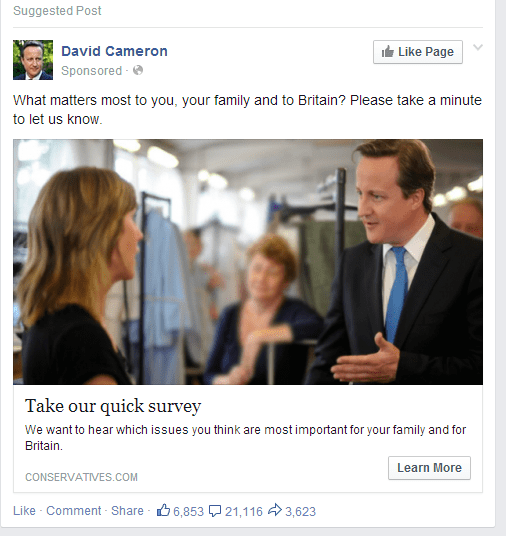

Biased sampling

Almost too obvious to mention. I don’t know exactly how these promoted Facebook posts work, but even assuming they go to a representative sample of people, promoting the survey with a personal message from David Cameron seems unlikely to yield an unbiased sample. If the Conservatives really wanted to find out people’s views on these topics, they could have paid a reputable polling organisation to run the survey for them. Indeed, they do this all the time for their own private polling. But not when they want junk stats.

Agree/disagree

This old chestnut! Asking someone to agree or disagree with a statement is the classic leading question, well known to bias responses in favour of agreement (so-called acquiescence bias). See here for a good explanation of this and other survey problems.

It would be easy to frame this as a neutral question, e.g.

How important a problem do you think the deficit is? (very important to very unimportant).

Finally, it’s unusual to have 11 response options (5 is usually fine for a Likert scale). I’m not sure what the rationale is for distinguishing between, say, 3 and 4.

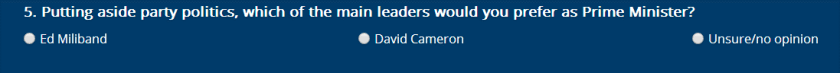

Non-comprehensive responses

The following isn’t necessarily a bad question, but the lack of a “no preference” or “neither, they’re both equally hopeless” type option will tend to force many people who do have an opinion to plump for one or the other (making it hard to interpret the data) rather than tick the “unsure/no opinion” option, which implies ignorance.

There’s no perfect solution to these problems (allowing ‘no preference’ type options gives respondents an easy ‘out’ when perhaps you’d like to force a decision). But two-stage filtering questions do help.

Anonymity/bias

Perhaps worst of all, to submit the survey, you have to give your name and email address to the Conservative Party. Asking for the respondent’s name is clearly unnecessary for this survey (they aren’t trying to do a panel survey) and will tend to exacerbate various biases. I’m sure they are fully compliant with the Data Protection Act, but this seems guaranteed to restrict responses to people who are happy to receive emails from the Conservative Party.

I look forward to seeing the results of this survey quoted at Prime Minister’s Questions…